About

I’m Micha Heethoff, a staff scientist in Nico Blüthgen’s Ecological Networks group at the Technische Universität Darmstadt (TUDA, Germany). Being interested in the functional 3D morphology of miniaturized animals (mites with a body size below 1 mm), I started to work with synchrotron X-ray microtomography (SR-µCT) in 2005. Later, our research group set out to create 3D models of pinned insects with real-color textures. That required another technique, and we wanted to combine photogrammetry with extended depth-of-field and multi-view imaging. For this task we developed the Darmstadt insect scanner (DISC3D, see Fig. 1) together with Bernhard Ströbel (h_da: Darmstadt University of Applied Sciences). We have recently also teamed up with curators from the Hessian State Museum Darmstadt (HLMD) and founded the nonprofit association DiNArDa (Digital Natural History Archive Darmstadt), which aims to develop DISC3D further so that it becomes easily available to every museum, research group or private person who wants to digitize insects or other small objects.

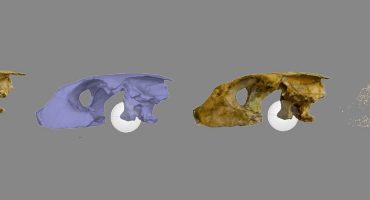

I have been working with SR-µCT at different facilities (ESRF, Grenoble, France; ANKA, Karlsruhe, Germany; DESY, Hamburg, Germany), always focusing on the morphology of small arthropods, mostly mites and insects. SR-µCT is a great technique for high-resolution and non-destructive 3D imaging and provides information about the outer and inner organization of an object. The resulting volume data (voxels) needs to be processed to isolate specific structures of interest. This can be done by volume rendering (or thresholding, e.g. Economolab shows numerous isosurfaces of CT scans of ants at Sketchfab) or segmentation (see here for great advances in semi-automatic segmentation). While isosurfaces are a quick way to generate surface models from CT-data, they usually don’t result in solid watertight models, and furthermore add a certain number of structures/polygons inside the model.
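To illustrate the thresholding idea, here is a minimal sketch in Python with NumPy. It uses a synthetic volume (a bright hollow shell on a dark background) rather than real CT data, and the function name `threshold_volume` and the grey values are assumptions chosen for this example only. It also hints at why isosurfaces tend to contain extra geometry inside the model: the threshold picks up the inner boundary of the shell as well as the outer one.

```python
import numpy as np

def threshold_volume(volume, level):
    """Return a boolean mask of voxels whose grey value is at or above `level`."""
    return np.asarray(volume) >= level

# Synthetic grey-value volume (hypothetical data, not a real scan):
# a bright hollow shell (radius 8 to 12 voxels) inside a dark background.
z, y, x = np.mgrid[-16:16, -16:16, -16:16]
r = np.sqrt(x**2 + y**2 + z**2)
volume = np.where((r >= 8) & (r <= 12), 200, 20).astype(np.uint8)

# Everything brighter than the chosen level counts as "object".
mask = threshold_volume(volume, level=100)

# The centre of the shell is empty, so a surface extracted from this mask
# would have an outer AND an inner boundary.
centre_is_object = bool(mask[16, 16, 16])
shell_is_object = bool(mask[16, 16, 26])
```

A surface mesh would then be extracted from such a mask (or directly from the grey values at the chosen level) by dedicated software; that step is not shown here.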

Whatever processing is applied, CT data consist of grey values only, so it is not possible to record the real color of an object. In addition, access to synchrotron facilities is highly competitive, and micro-CT scanners are very expensive and only available at a few facilities. Finally, when it comes to pinned insects (they are usually preserved on a steel needle, see Fig. 2), the needle produces severe artifacts in the tomograms that have to be removed in a time-consuming process.

Hence, for large-scale digitization of pinned insects (which exist in the millions in museum collections), an automated, affordable and available technique is mandatory. Furthermore, the inner organization (organs, musculature, nervous system, etc.) of the air-dried and often quite old specimens seems to be of limited value.

Michael Heethoff (left, author, co-developer of DISC3D), 3D-morphologist (SR-µCT, SFM). Bernhard Ströbel (right, developer of DISC3D).

DISC3D delivers hundreds of extended-depth-of-field (EDOF) images from different viewing angles in a fully automated process. These sharp and highly resolved images can be used for scientific purposes (visual inspection of the morphology from virtually any viewing angle), so that taxonomists no longer need to access and handle the physical specimens, at least as long as no further mechanical treatment (e.g., preparation of genitalia) is needed. Furthermore, the images provide an ideal basis for photogrammetric reconstructions (uniform/masked background, EDOF, known camera positions), resulting in scaled, watertight, and textured 3D models. We use Agisoft Photoscan Pro with visual consistency for mesh generation (the process is fully described in our open access paper).

The major challenge was generating EDOF images with a correct perspective (following the pinhole camera model that is required for photogrammetry). We solved this by imaging a calibration target and applying the resulting information (shift and scale) to the scanned specimens.
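As a rough illustration of what applying a shift and a scale can mean, the sketch below maps pixel coordinates by scaling them about an image centre and then translating them. This is not the DISC3D implementation: the function name `correct_points`, the image centre, and the numeric scale and shift values are all hypothetical, and in practice such values would come from the calibration target.

```python
import numpy as np

def correct_points(points_px, scale, shift_px, center_px):
    """Scale pixel coordinates about `center_px`, then translate by `shift_px`."""
    points_px = np.asarray(points_px, dtype=float)
    center_px = np.asarray(center_px, dtype=float)
    shift_px = np.asarray(shift_px, dtype=float)
    return (points_px - center_px) * scale + center_px + shift_px

# Hypothetical calibration result for one image: 2 % magnification change
# and a small lateral offset (values invented for this example).
center = (500.0, 500.0)
corrected = correct_points(
    [[600.0, 500.0], [500.0, 300.0]],
    scale=1.02,
    shift_px=(1.5, -0.5),
    center_px=center,
)
```

Applying the same mapping to whole images would require resampling (interpolation), which is left out here to keep the example short.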

Here are some of my favourite models generated with DISC3D – and not all are insects ;-)

What we learned over the last years of development: it’s worth spending time on getting the best images you can, masking only some of them, and then using the strict volumetric masking in Photoscan – this is the fastest approach and provides the best results (at least for our kind of data). Why do we use Agisoft Photoscan? Because import and export of cameras is possible and the visually consistent mesh generation is, as far as I know, currently unique.

I’m convinced that the future will bring more and more virtual 3D collections (virtual museums), and make them accessible to the public (see several examples here on Sketchfab, e.g., IVL Paleontology, UOD Museums). Especially for small (such as insects) and unique objects this will provide new perspectives in education and exhibition conceptualization.

Why Sketchfab?

I found Sketchfab while searching for a way to visualize 3D models of insects on a platform that is freely available to everybody. And I realized that there are only a few good real (scanned, not designed) models of insects available (and those are mostly large specimens). I was impressed by the great number of options and the quick visualization, so I gave it a try and started to upload some of our models – and received immediate positive feedback ;-). If I had a wish: it would be great to have some measuring options in the viewer (surface, volume, distances)…

Here are some of my favourite models on Sketchfab that show how 3D models can help to visualize and understand animal morphology:

Mountain lion:

This skeleton was reconstructed from single bones that were scanned individually.

Climbing gecko:

Snake skull:

This segmented model is a wonderful example of how 3D information can be useful for teaching anatomy.

Micha’s Website / Disc3D Website